Authorities are investigating a secondary school in the German-speaking part of the country, where students used artificial intelligence to create and circulate deepfake nude images of classmates.

The manipulated photos, made with freely accessible AI tools and shared on social media platforms such as Snapchat, appear to show classmates undressed, although the images are fabricated.

Local prosecutors have opened a juvenile investigation, and legal experts warn that creating or sharing such images could fall under child pornography laws.

The case has sparked fresh debate about the risks of AI misuse among young people and the urgent need for better education and safeguards around digital technology.

Bacteria warning in lakes

Bacteria warning in lakes

Vengeron beach opens

Vengeron beach opens

Nyon family suffers frightening attack

Nyon family suffers frightening attack

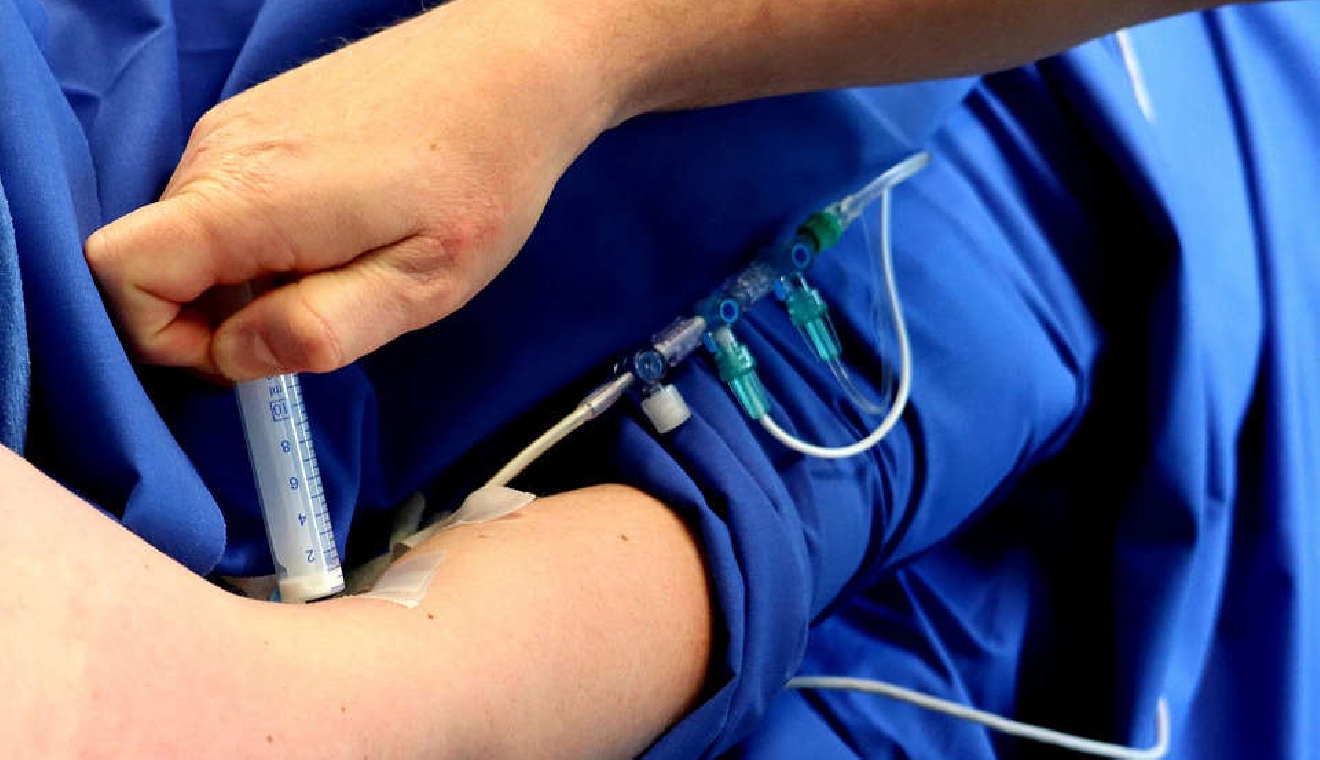

Lausanne at forefront on antibiotic resistance research

Lausanne at forefront on antibiotic resistance research

Luxury shoemaker ends Swiss production

Luxury shoemaker ends Swiss production

Speed camera vandal spared prison

Speed camera vandal spared prison